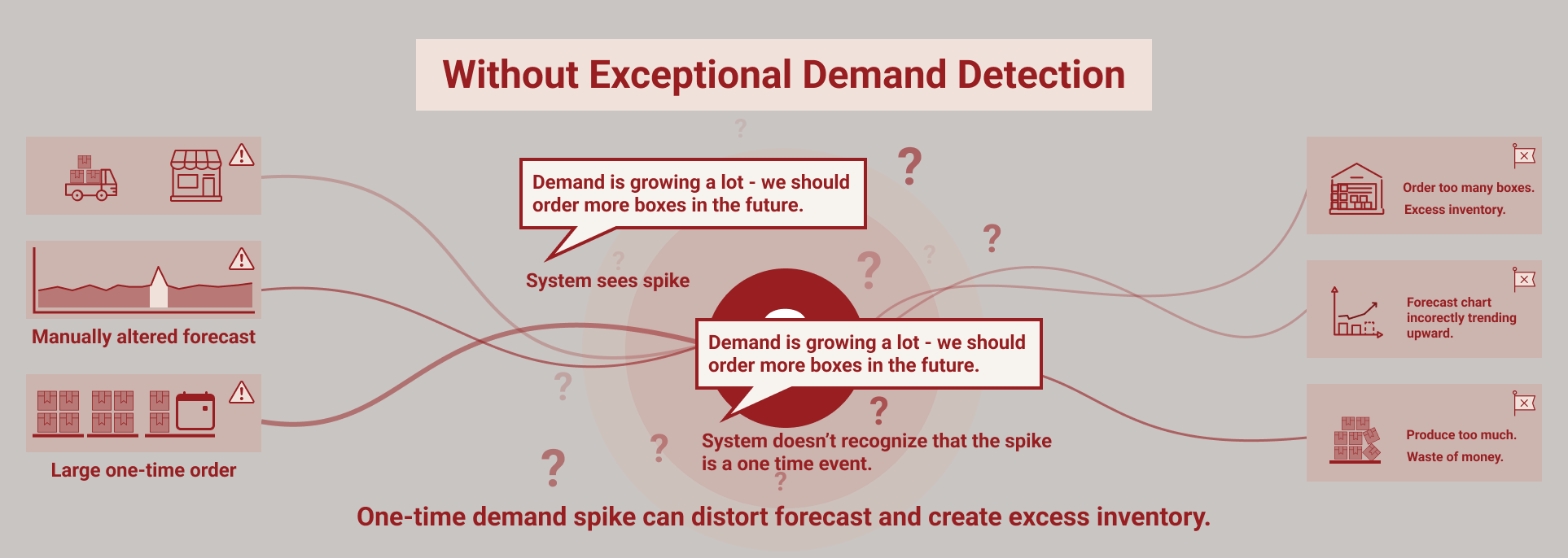

Accurate forecasting depends on understanding what demand truly represents.

Yet in most supply chains, demand data contains temporary spikes, exceptional orders, and one‑off events that do not reflect normal buying behavior. When these anomalies are not handled correctly, they distort forecasts and introduce noise into planning decisions.

As product portfolios grow and demand becomes more volatile, manual review of these exceptions becomes increasingly difficult.

Why exceptional demand is hard to manage

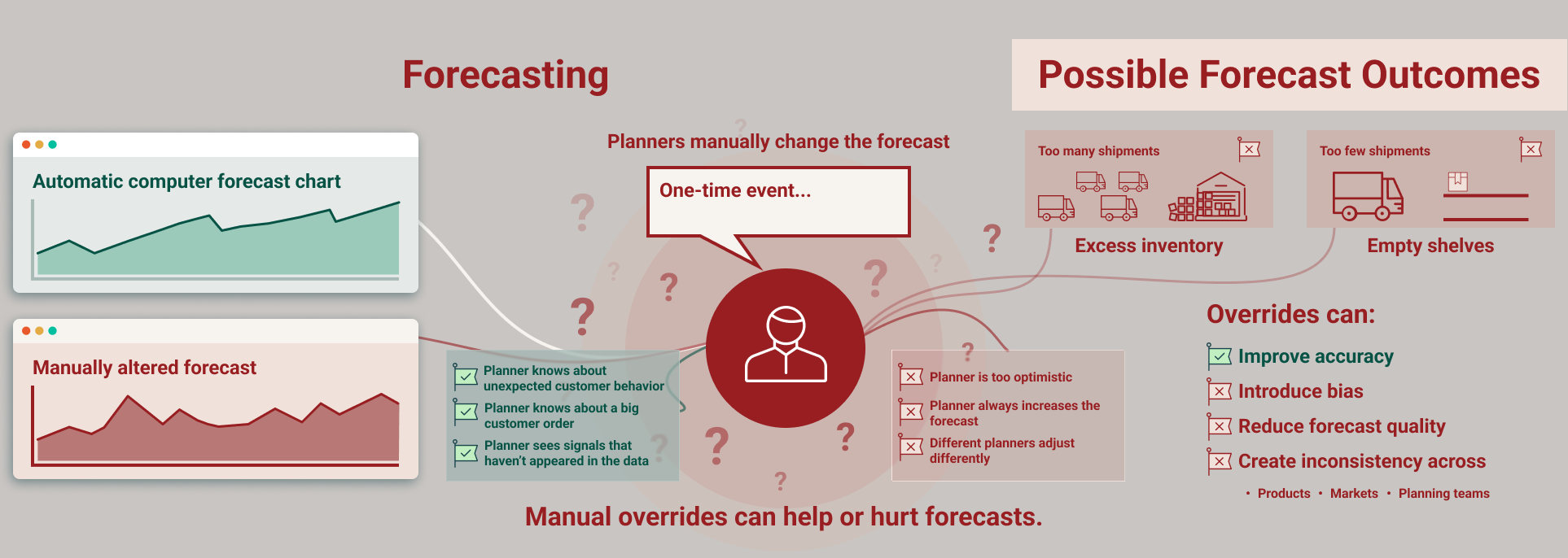

One‑time events can come from many sources: project orders, promotions, panic buying, or short‑lived customer behavior. In traditional planning environments, identifying these outliers often depends on individual planners manually reviewing demand history and deciding what should influence the forecast.

This approach does not scale. Decisions become inconsistent, subjective, and time‑consuming. Some anomalies slip through unnoticed, while others are handled differently across products, customers, and regions.

From manual review to automated anomaly detection

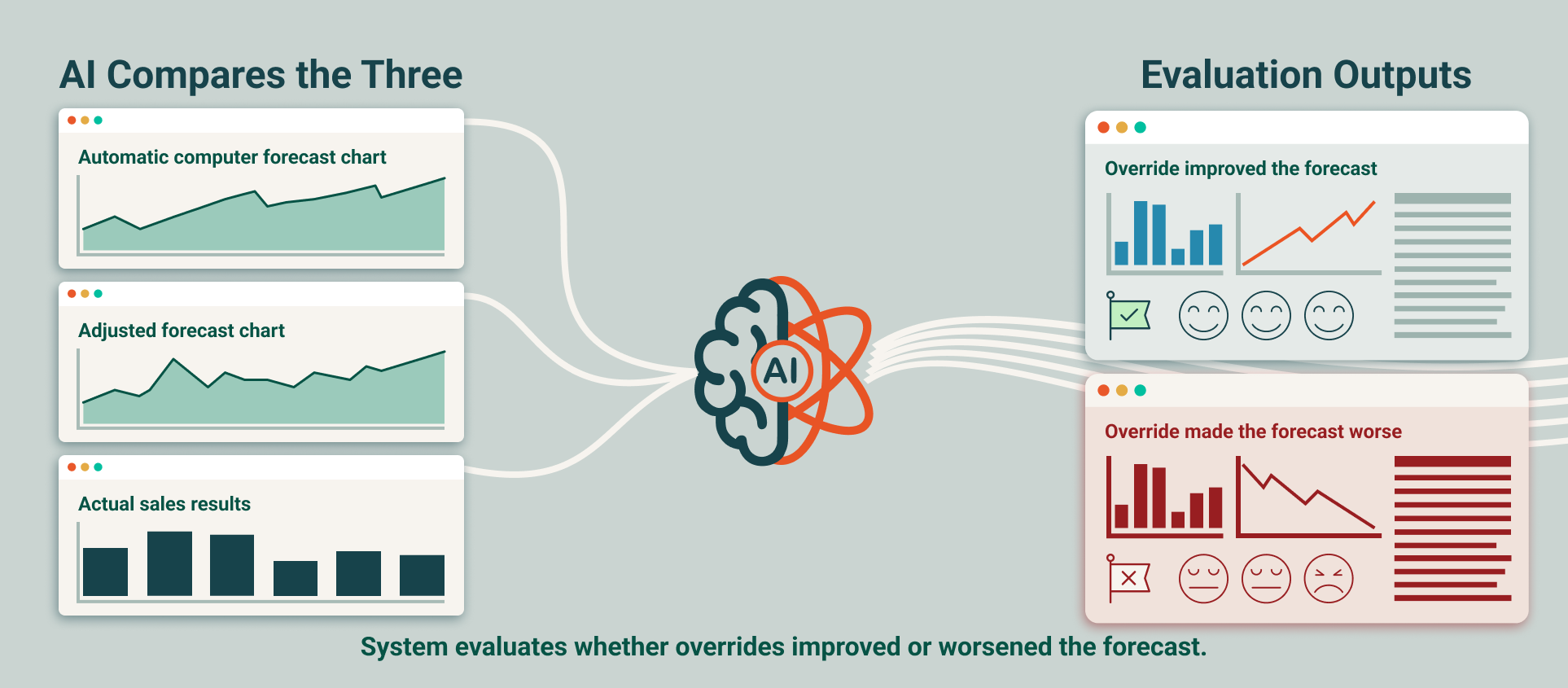

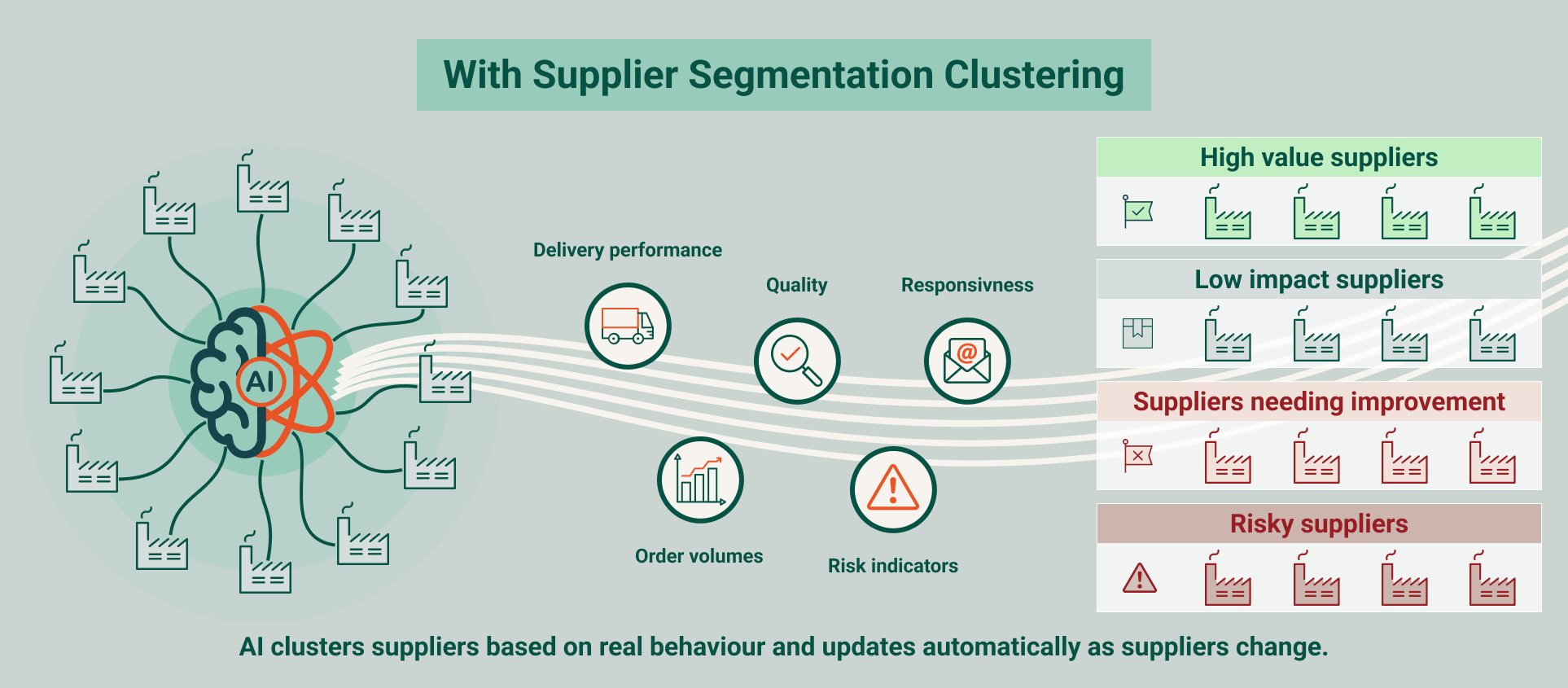

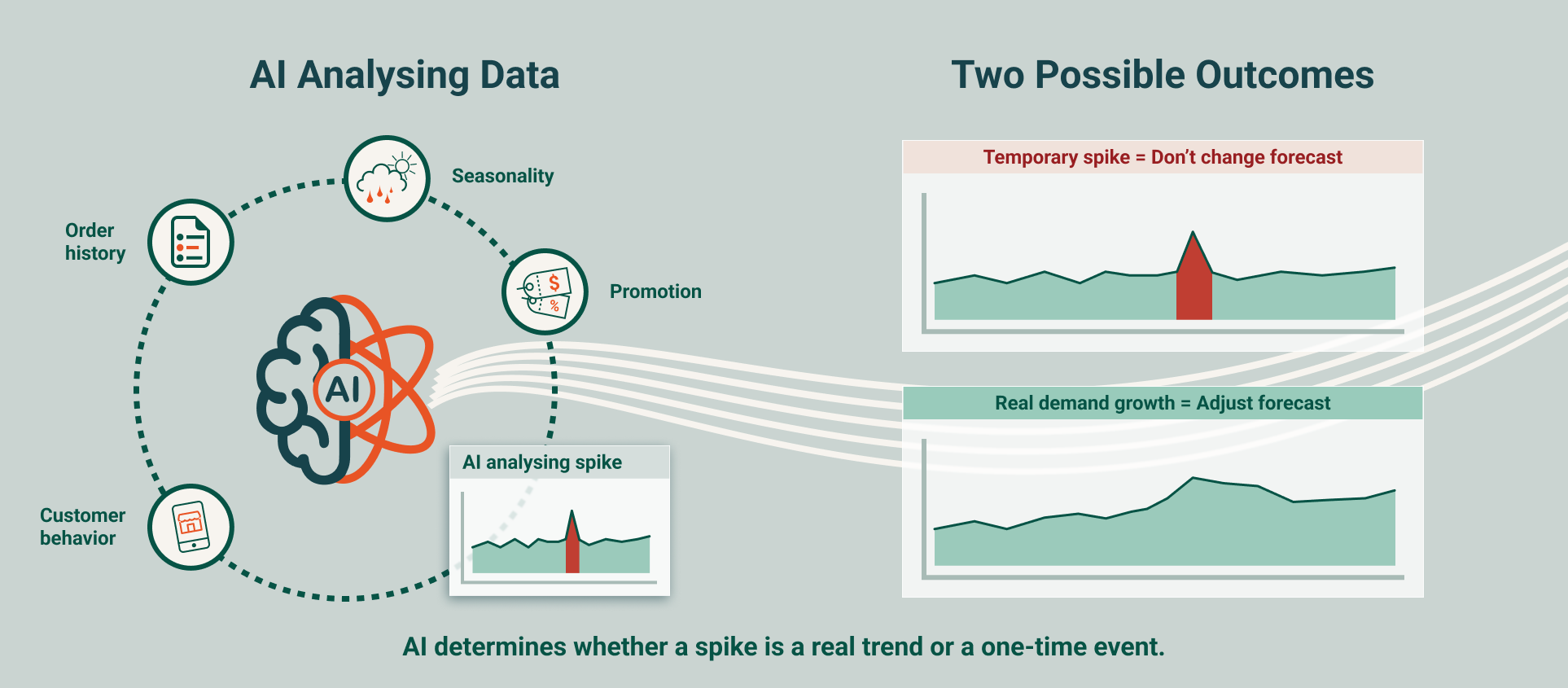

AI‑driven exceptional demand detection introduces an automated layer that continuously evaluates demand behavior. Machine learning models learn what “normal” looks like for each product and customer by analyzing historical patterns, seasonality, lifecycle effects, and buying behavior over time.

When demand deviates meaningfully from expected patterns, the system determines whether the change represents a genuine shift in demand or a temporary anomaly that should not influence the baseline forecast.
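To make the idea concrete, here is a minimal sketch of the same logic using a simple statistical stand-in rather than a trained machine learning model. It assumes "normal" can be approximated by the median of a trailing window, and that a deviation counts as a genuine shift only if the following periods stay away from that baseline. The function names, the window length, the threshold, and the persistence rule are all illustrative assumptions, not a description of any specific product.

```python
from statistics import median


def robust_z(value, baseline):
    """Modified z-score of `value` against a baseline window (median/MAD based)."""
    med = median(baseline)
    mad = median([abs(x - med) for x in baseline])
    if mad == 0:
        # Flat baseline: any difference at all is treated as an extreme deviation.
        return 0.0 if value == med else float("inf")
    return 0.6745 * (value - med) / mad


def classify_deviations(history, window=6, threshold=3.5, persistence=2):
    """Label each period 'normal', 'anomaly', 'shift', or 'unconfirmed'.

    Assumed rule: a period deviates if its robust z-score against the previous
    `window` periods exceeds `threshold`. It is a 'shift' if the next
    `persistence` periods also deviate from that same baseline, an 'anomaly'
    if they do not, and 'unconfirmed' if too little later data exists.
    """
    labels = ["normal"] * len(history)
    for i in range(window, len(history)):
        baseline = history[i - window:i]
        if abs(robust_z(history[i], baseline)) <= threshold:
            continue
        following = history[i + 1:i + 1 + persistence]
        if len(following) < persistence:
            labels[i] = "unconfirmed"
        elif all(abs(robust_z(x, baseline)) > threshold for x in following):
            labels[i] = "shift"
        else:
            labels[i] = "anomaly"
    return labels


# Hypothetical monthly demand: one project order, then back to normal.
one_off = [100, 102, 98, 101, 99, 100, 400, 101, 99, 100]
# Hypothetical monthly demand: a step change that persists.
step_change = [100, 102, 98, 101, 99, 100, 200, 205, 198]

one_off_labels = classify_deviations(one_off)
step_change_labels = classify_deviations(step_change)
```

In this sketch the 400-unit period is labeled an anomaly because demand returns to its usual level immediately afterwards, while the first 200-unit period is labeled a shift because the higher level persists. Periods labeled "unconfirmed" are the natural candidates for planner review. A production system would also need to account for seasonality and lifecycle effects, which this simplification ignores.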

How automated detection supports planners

- Identifies one‑time demand spikes before they distort forecasts

- Separates temporary anomalies from real demand changes

- Reduces the need for planners to manually inspect demand history

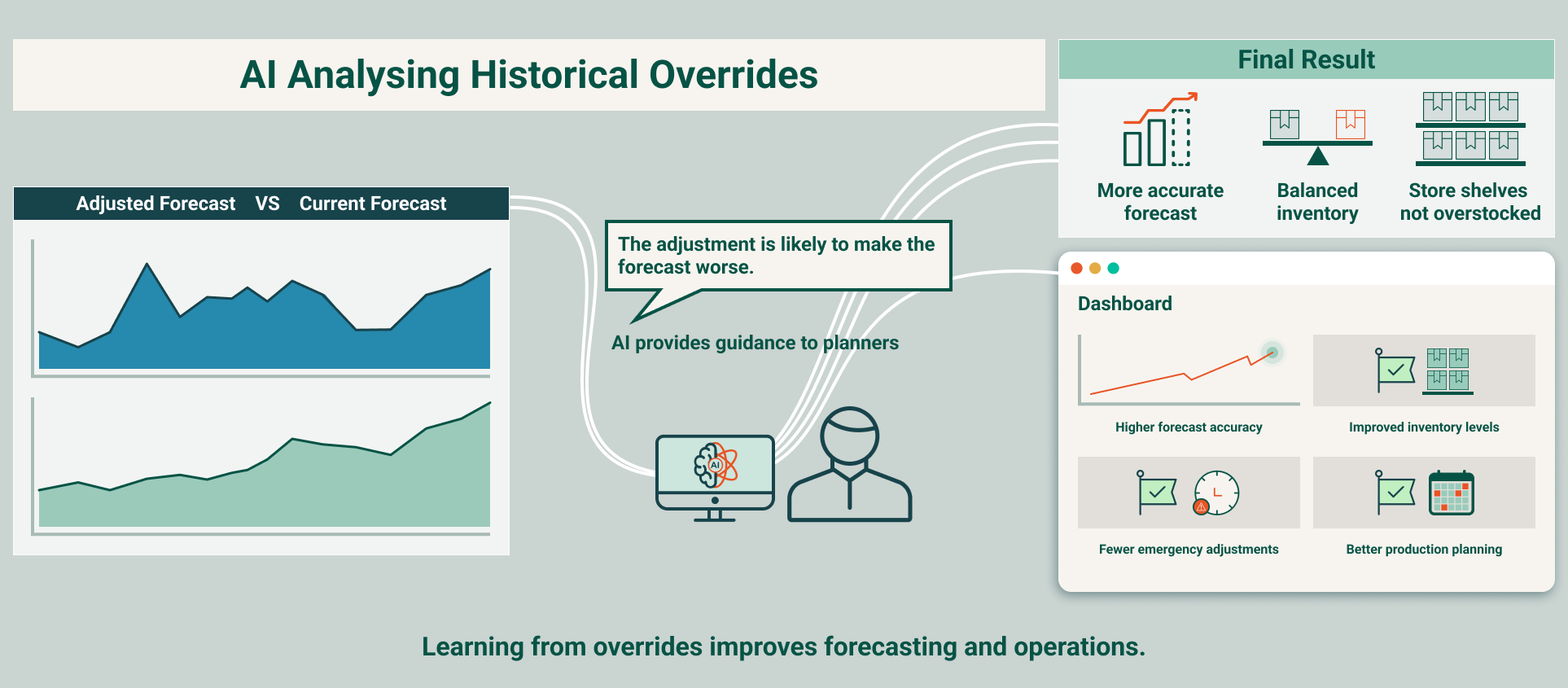

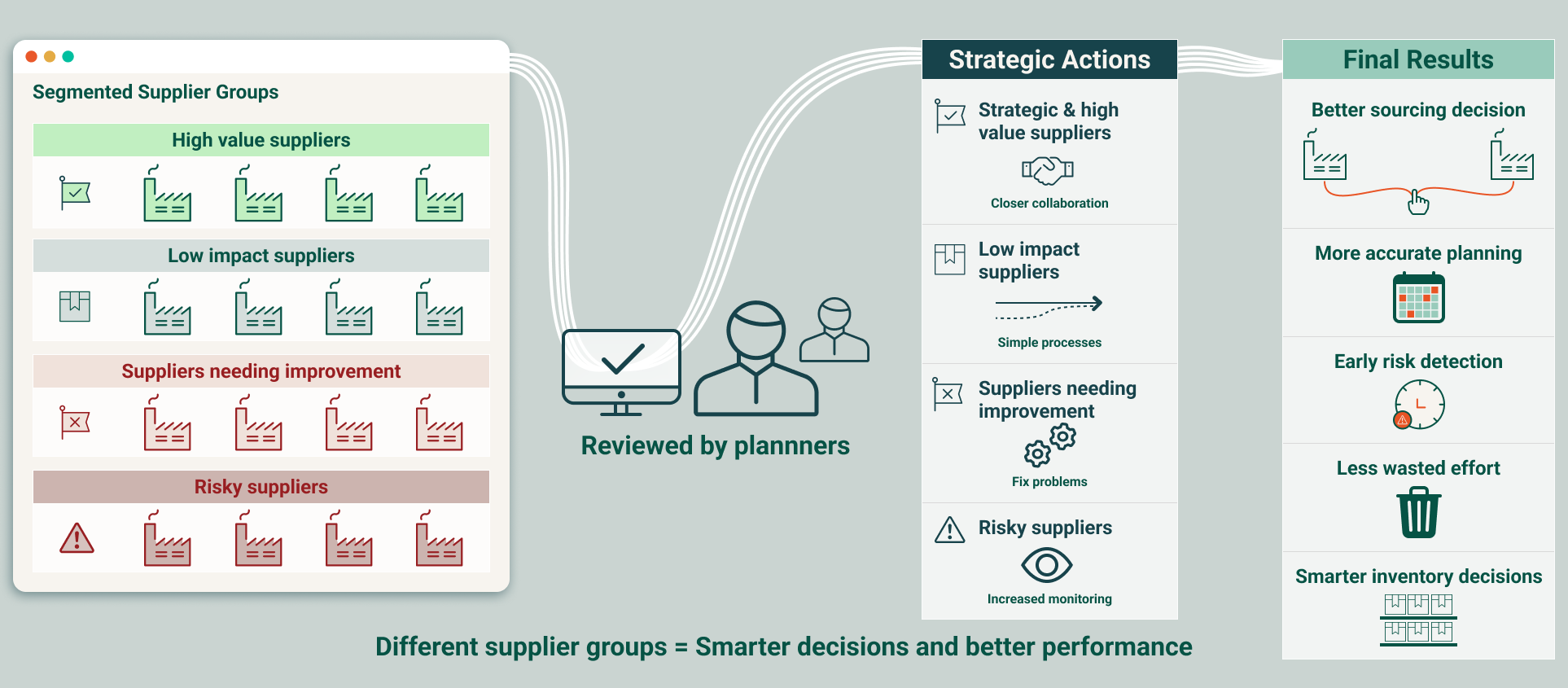

Planners are involved only when validation or context is needed, allowing them to focus on analysis instead of data cleanup.

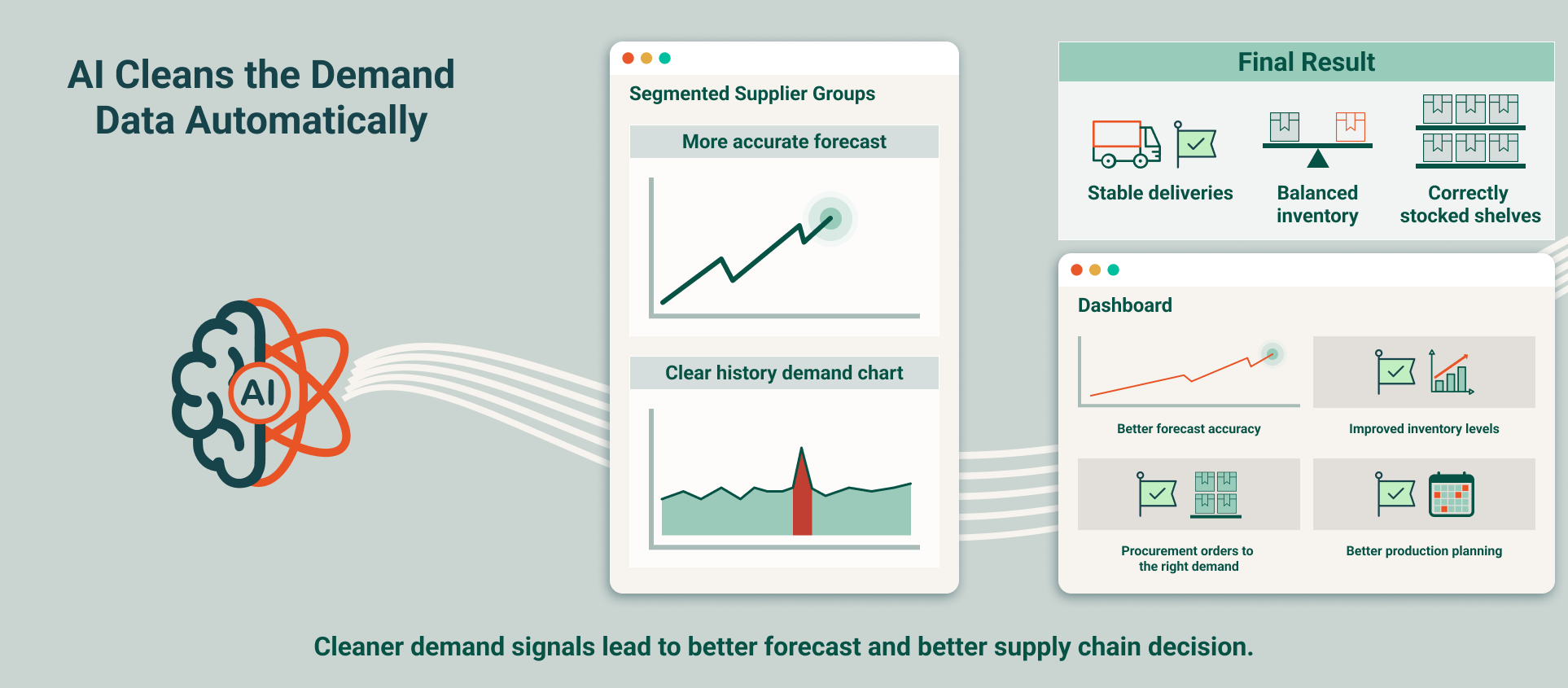

Cleaner inputs lead to calmer planning

With anomalies handled consistently, forecasting models work with cleaner and more stable input data. This improves forecast reliability and reduces the overreaction that often follows temporary demand spikes.
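A small worked example shows why this matters. The sketch below assumes a deliberately naive three-period moving-average forecast and a simple cleansing rule that replaces flagged periods with the median of the remaining history. Both the forecast method and the helper names are illustrative assumptions chosen to keep the arithmetic easy to follow.

```python
from statistics import mean, median


def moving_average_forecast(history, window=3):
    """Forecast the next period as the mean of the last `window` periods."""
    return mean(history[-window:])


def cleanse(history, flagged):
    """Replace flagged periods with the median of the unflagged periods."""
    typical = median([x for i, x in enumerate(history) if i not in flagged])
    return [typical if i in flagged else x for i, x in enumerate(history)]


# Hypothetical demand history where the last period holds a one-off order.
raw = [100, 102, 98, 101, 99, 400]
cleaned = cleanse(raw, flagged={5})

raw_forecast = moving_average_forecast(raw)
clean_forecast = moving_average_forecast(cleaned)
```

With the spike left in, the forecast for the next period is 200 units, double the typical level. With the flagged period replaced, the forecast is 100 units, in line with normal demand.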

Downstream processes also benefit. Inventory levels are better aligned with real demand, production schedules become more stable, and procurement avoids ordering excess materials driven by short‑term noise. The entire planning process becomes more predictable and easier to manage.

Measurable impact

- Higher forecast accuracy through cleaner demand history

- Reduced manual workload for planners

- More consistent handling of demand anomalies across teams

- Improved inventory and production alignment

Want to learn more?

With 20+ years of experience and more than 1,000 successful projects, Optilon helps companies design supply chains that work and keep improving.

Book a meeting with a supply chain expert to explore how predictive demand sensing can improve forecast accuracy, reduce demand uncertainty, and strengthen customer insights.